Discover the Onix blog

Trending Articles

Discover the Onix blog about software development and IT technologies to stay updated about the latest technology trends and get lots of tips from software development experts.

ML

Dive into an in-depth overview of machine learning technology that will bring solid competitiveness to your business and improve the user experience.

Travel

Discover the Onix travel technology blog to stay updated on travel and tourism technology trends and get lots of useful tips about TravelTech software development.

VR/AR

Discover what's possible with augmented and virtual reality in business on the Onix AR/VR technology blog.

How much does it cost to hire a dedicated team to build a top-tier solution?

Specify the number of experts and technologies you need and get an approximate cost for your project!

Whitepapers

Explore our extensive collection of insightful whitepapers covering a diverse range of topics.

FinTech

Discover the Onix FinTech blog to stay updated on financial and banking technology trends and get lots of useful tips about fintech software development.

Sports & Fitness

Blog of a mobile and web application development company in the sports and fitness industry. Read articles about fitness and sports app development written by our experienced IT experts.

Yoga and meditation apps

VR/AR App Development

Apr 18, 2024

26 min read

750 views

VR-Based Meditation App Development [Onix’s Guide]

In this post, we discuss the applications of virtual reality for mental well-being and share Onix’s experience and tips on virtual reality meditation app development.

Nikola Makarevych

Healthcare

Discover the Onix healthcare information technology blog to stay updated on healthcare technology trends and get lots of useful tips about HealthTech software development.

UI/UX Design

Explore our blog now and stay informed about the latest trends, best practices, and innovative ideas that will take your business to new heights.

Education

Discover the Onix education technology blog to stay updated on eLearning and online educational technology trends and get lots of useful tips about EdTech software development.

Join us now and get your FREE copy of "Software Development Cost Estimation"!

This pricing guide is created to enhance transparency, empower you to make well-informed decisions, and alleviate any confusion associated with pricing. In this guide, you'll find:

﹂ Factors influencing pricing

﹂ Pricing by product

﹂ Pricing by engagement type

﹂ Price list for standard engagements

﹂ Customization options and pricing

Product Development

Explore our Product Development blog for the latest trends and valuable insights into crafting successful products.

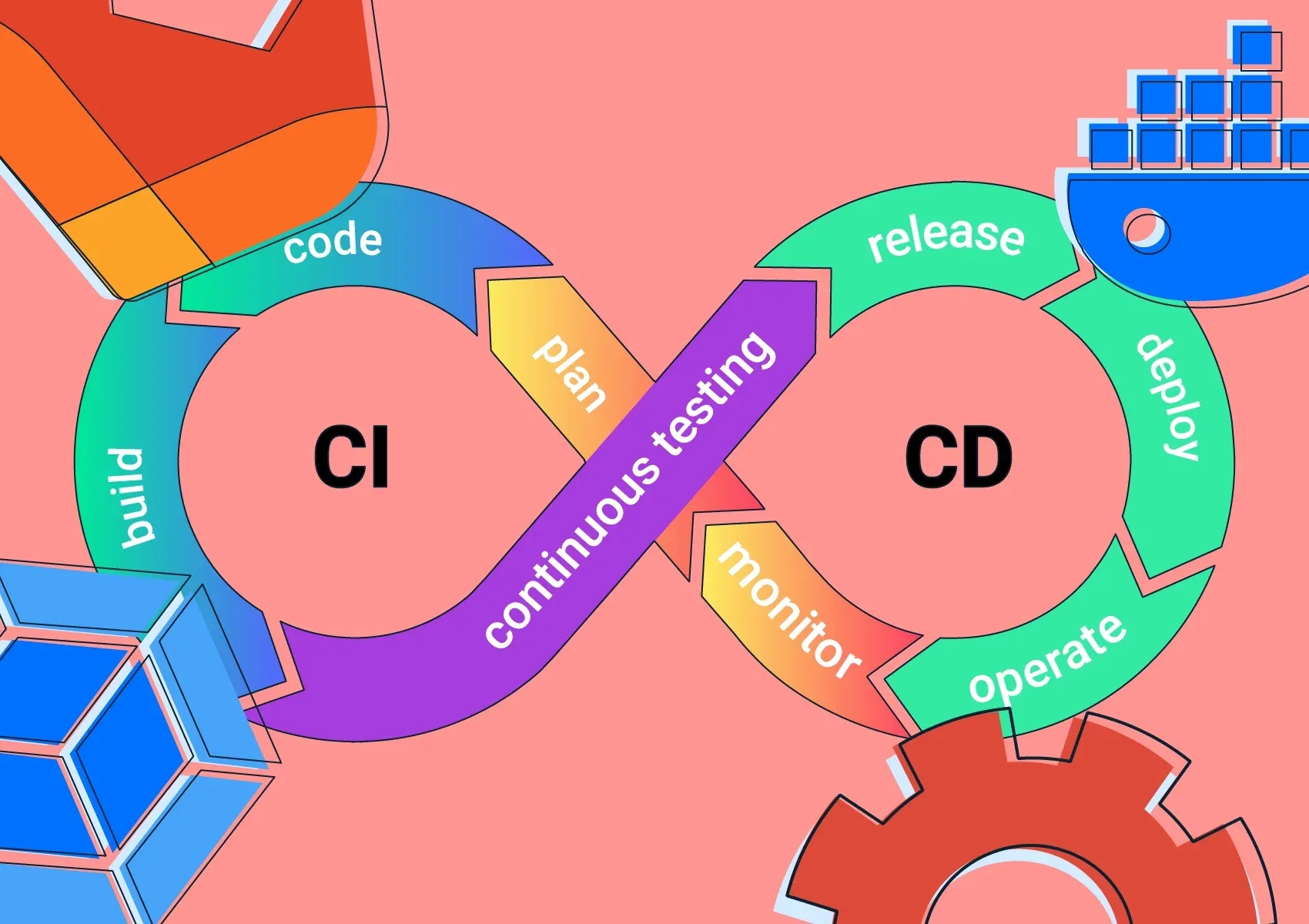

Technologies

Learn about current technology innovations from the latest Onix articles, and stay up-to-date on the latest solutions and their development details.

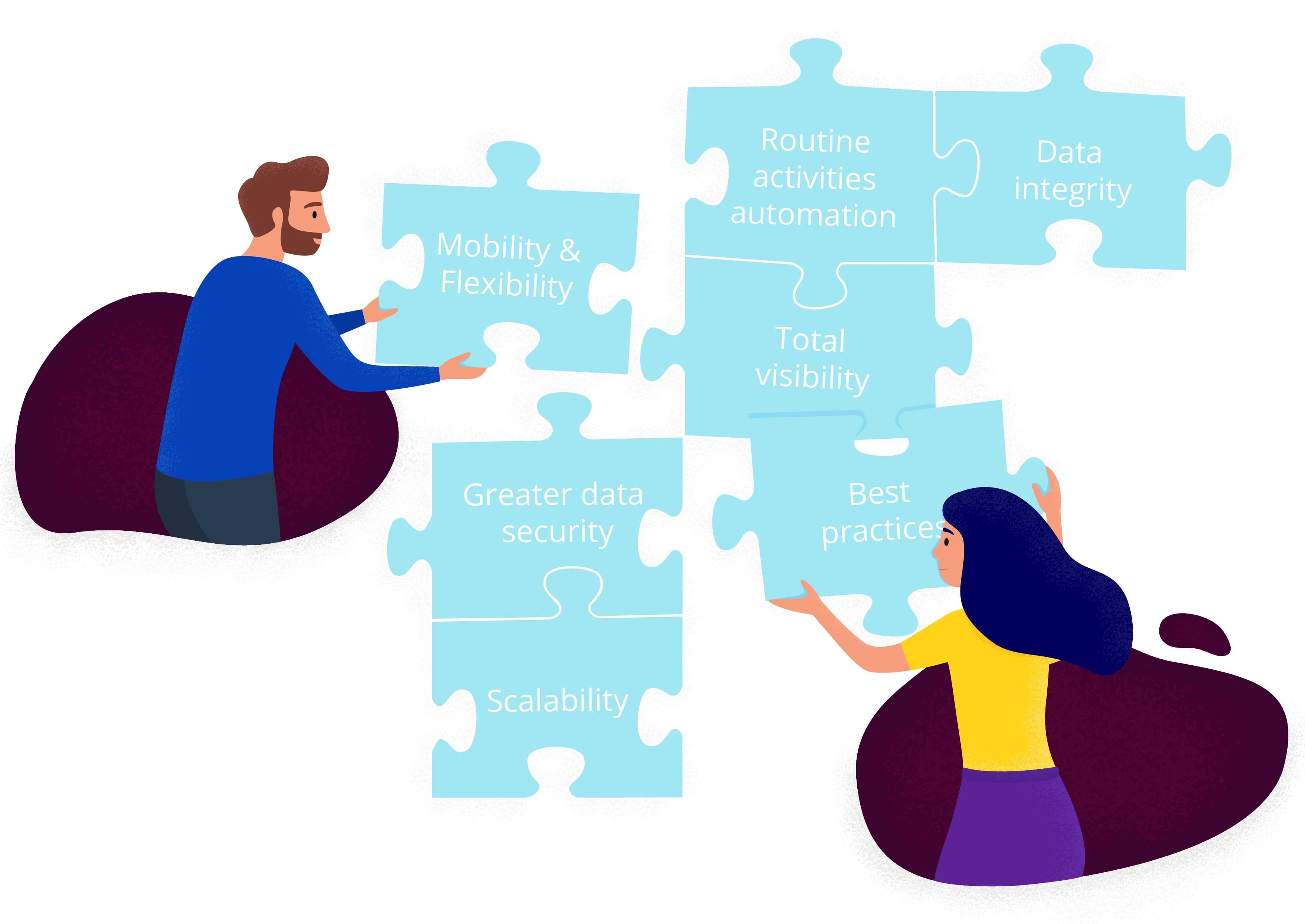

Salesforce

Learn about innovations in the Salesforce ecosystem that help attract more customers and increase revenue. All Salesforce trends and news are here.

Case studies

Our solutions boost clients' business efficiency and satisfy users’ expectations.

Mobile

Discover the Onix mobile app development blog to stay updated on mobile technology trends and get lots of useful tips about mobile app development.

Web

On the Onix web development blog, we follow web technology trends and share our knowledge and experience.

News

Stay informed with the latest news, company announcements, and updates from Onix-Systems through our comprehensive blog.